The Story: Two Audit Seasons, One Difference

Last year, your team burned weeks assembling evidence, reconciling spreadsheets, and explaining “what really happened” to auditors.

Next year, you either repeat that…

Or you walk in with live dashboards, pre-packaged evidence, and a clear story of how AI gives you continuous assurance, not last-minute chaos.

The difference isn’t a 5-year roadmap.

It’s what you do in the next 90 days.

Here’s the plan: simple, pointed, and executable.

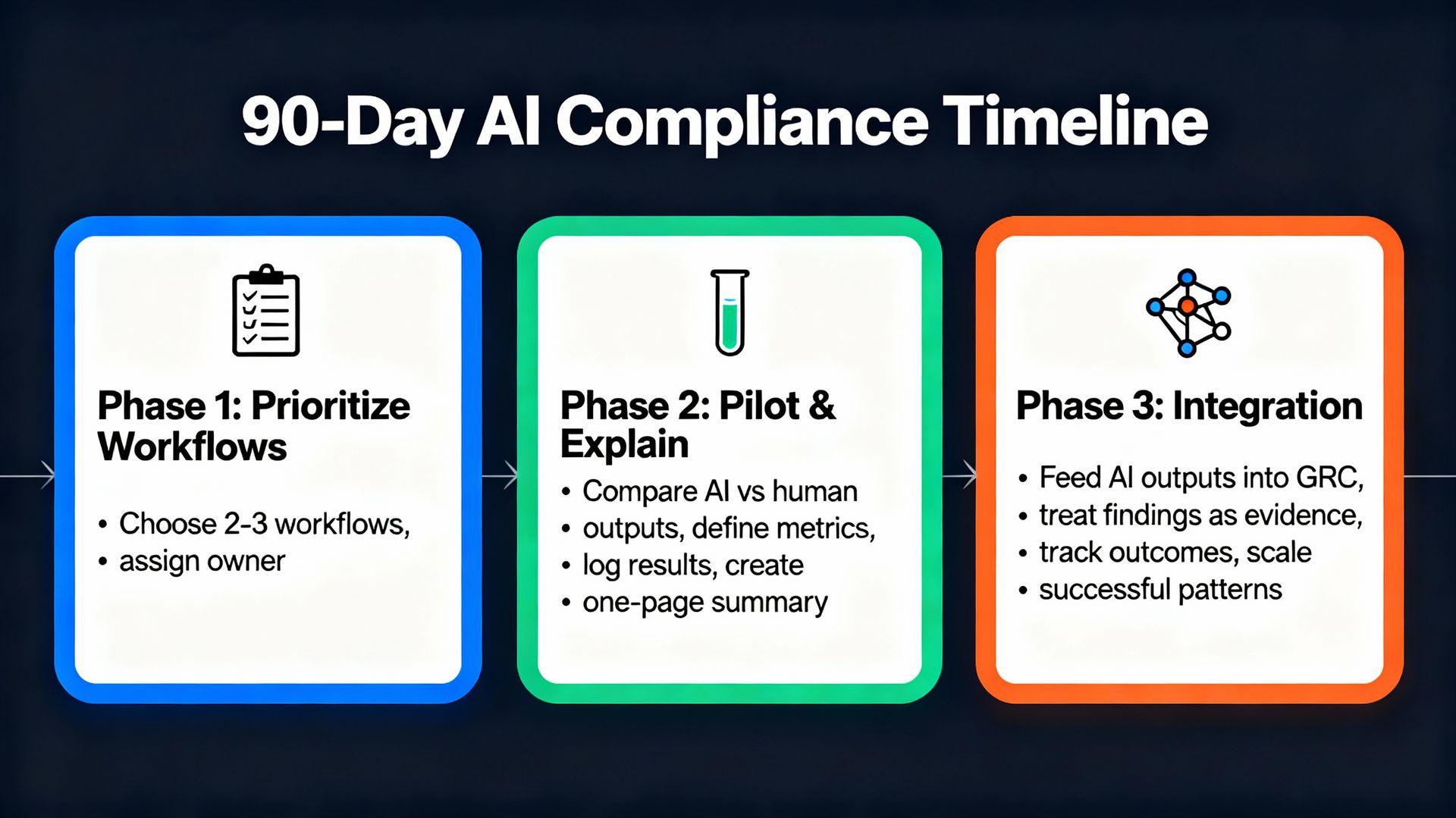

Phase 1 (Days 1–30): Decide Where AI Actually Works

Objective: Focus. Stop talking about “AI in GRC” in general and pick a few battles to win.

Pick 2–3 painful workflows

Examples: evidence collection for SOC 2/ISO, access reviews, policy–regulation mapping.

For each, write down:

Hours/month spent

Number of people involved

Typical delays and failure points

Define what “better” means:

Time cut in half, fewer escalations, cleaner evidence trail, fewer repeat findings.

You’re not transforming the universe. You’re choosing where AI must earn its keep.
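To make the Phase 1 baseline concrete, here is a minimal Python sketch of how you might record each candidate workflow and rank the shortlist. The class name, field names, and all numbers are hypothetical placeholders, not real benchmarks. It assumes "hours/month" means total team hours and that "better" is expressed as a target reduction (0.5 for "time cut in half").

```python
from dataclasses import dataclass, field


@dataclass
class WorkflowBaseline:
    """Baseline for one candidate workflow (all field names are hypothetical)."""
    name: str
    hours_per_month: float          # assumed: total team hours per month
    people_involved: int
    failure_points: list[str] = field(default_factory=list)
    target_reduction: float = 0.5   # "time cut in half" as a fraction

    def target_hours(self) -> float:
        """Monthly hours you would need to hit to call the pilot 'better'."""
        return self.hours_per_month * (1 - self.target_reduction)


def rank_by_pain(workflows: list[WorkflowBaseline]) -> list[WorkflowBaseline]:
    """Sort candidates so the most time-consuming workflow comes first."""
    return sorted(workflows, key=lambda w: w.hours_per_month, reverse=True)


# Placeholder values for illustration only; substitute your own measurements.
candidates = [
    WorkflowBaseline("Access reviews", 80, 4, ["stale approver lists"]),
    WorkflowBaseline("SOC 2 evidence collection", 120, 6, ["late screenshots"]),
    WorkflowBaseline("Policy-regulation mapping", 40, 2),
]

shortlist = rank_by_pain(candidates)[:3]
for w in shortlist:
    print(f"{w.name}: {w.hours_per_month}h/month now, target {w.target_hours()}h")
```

Ranking by hours alone is a simplification. You may prefer to weight by delays or repeat findings, but writing the baseline down in any structured form is what makes the Phase 2 before/after comparison possible.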

Phase 2 (Days 31–60): Pilot, Measure, Explain

Objective: Prove AI helps and that you can defend how it works.

Plug AI into those workflows to:

Draft comparisons (policy ↔ regulation).

Pull and label evidence from source systems.

Cluster/score risks, incidents, or exceptions.

Draw a hard line between AI suggestions and human decisions.

Capture before/after metrics:

Cycle time, manual steps removed, issues caught earlier.

Log everything:

Inputs, outputs, overrides, rationale.

Create a one-page “How AI is used in this process” you’d be comfortable showing a regulator.

If you can’t explain it in plain language, it’s not ready.
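The "log everything" step above can be sketched as one structured record per AI suggestion. This is an illustrative Python example, not a prescribed schema: the record type, field names, and sample values are assumptions. It keeps the AI suggestion and the human decision in separate fields and refuses to store an override without a rationale.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AIDecisionRecord:
    """One logged AI suggestion plus the human decision (hypothetical schema)."""
    workflow: str
    ai_input: str
    ai_suggestion: str
    human_decision: str
    reviewer: str
    rationale: Optional[str] = None
    timestamp: str = ""

    def __post_init__(self) -> None:
        # Stamp the record in UTC if the caller did not supply a time.
        if not self.timestamp:
            self.timestamp = datetime.now(timezone.utc).isoformat()
        # An override without a reason is not defensible evidence.
        if self.overridden and not self.rationale:
            raise ValueError("An override must record a rationale")

    @property
    def overridden(self) -> bool:
        """True when the human decision differs from the AI suggestion."""
        return self.human_decision != self.ai_suggestion


def to_log_line(record: AIDecisionRecord) -> str:
    """Serialize a record as one JSON line for an append-only log."""
    payload = asdict(record)
    payload["overridden"] = record.overridden
    return json.dumps(payload, sort_keys=True)


# Sample values are invented for illustration.
record = AIDecisionRecord(
    workflow="access review",
    ai_input="account with admin role, no login in 200 days",
    ai_suggestion="revoke",
    human_decision="keep",
    reviewer="reviewer@example.com",
    rationale="Break-glass account, reviewed quarterly",
)
print(to_log_line(record))
```

Because each line is self-describing JSON, the same log can feed the before/after metrics and the one-page explanation without a separate reconciliation step.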

Phase 3 (Days 61–90): Wire It Into Governance

Objective: Stop treating AI as a side experiment and make it part of your GRC system.

Feed AI outputs into your GRC platform as:

Risks, issues, control test results, evidence objects.

Treat AI findings as first-class evidence:

Track how often they’re confirmed or overridden by humans.

Record why they’re overridden.

Tune based on reality:

Reduce noise where AI over-flags.

Tighten thresholds where it misses obvious issues.

Decide which pattern to scale next:

Once one workflow is stable and explainable, extend the same pattern to others.

Now AI isn’t a toy. It shows up in the same place as everything else you’re accountable for.
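As a rough illustration of the Phase 3 "track and tune" loop, the Python sketch below counts how often AI flags are confirmed or overridden at a given score threshold, and how many real issues fall below it. It assumes the AI emits a score between 0 and 1 and that humans have reviewed a sample of unflagged items too; otherwise missed issues cannot be measured. All names and sample data are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ReviewedItem:
    """An AI-scored item with the human verdict (hypothetical structure)."""
    ai_score: float          # assumed: 0.0 to 1.0, higher means more likely an issue
    human_says_issue: bool


def evaluate(items: list[ReviewedItem], threshold: float) -> dict:
    """Summarize confirmations, overrides, and misses at one threshold."""
    flagged = [i for i in items if i.ai_score >= threshold]
    confirmed = sum(i.human_says_issue for i in flagged)
    overridden = len(flagged) - confirmed
    missed = sum(i.human_says_issue for i in items if i.ai_score < threshold)
    return {
        "threshold": threshold,
        "flagged": len(flagged),
        "confirmed": confirmed,
        "overridden": overridden,   # noise: AI over-flagged
        "missed": missed,           # real issues the AI did not flag
        "override_rate": overridden / len(flagged) if flagged else 0.0,
    }


# Invented sample data for illustration only.
items = [
    ReviewedItem(0.95, True), ReviewedItem(0.90, True), ReviewedItem(0.80, False),
    ReviewedItem(0.70, True), ReviewedItem(0.60, False), ReviewedItem(0.40, False),
    ReviewedItem(0.30, True),
]

for threshold in (0.5, 0.75):
    print(evaluate(items, threshold))
```

Comparing two thresholds side by side shows the trade-off directly: raising the threshold cuts overrides but lets more real issues slip through, which is the judgment call your governance process should own and document.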

What You Do This Week

Name your 2–3 highest-yield workflows.

Assign one owner for the 90-day AI compliance push.

Schedule a 30-minute check-in with your leadership team in 30 days to show:

Where you’re using AI

How it’s governed and controlled

The concrete risk reduction and efficiency gains

That’s it.

Every control tells a story. Share yours and continue the dialogue with me on LinkedIn.
